Ronen Bekerman is an industry leader in Architectural Visualization who hosts in-depth tutorials on his specialist blog. Architizer is pleased to present a selection of these guides, written by some of the world’s best rendering artists.

This August marked my blog’s seventh year running. I learned a lot during that time and keep on learning as I put myself in a position of always having my finger on the pulse of the architectural visualization field. Starting in architectural visualization back in 2002, I’ve made my way as a solo artist and recently partnered up to establish The Craft. I took time this month to reflect on that which we do on a daily basis.

At times, it was hard to see the forest for the trees.

But with time also comes clarity.

I love the fact I can refer back to the blog and to the great insight shared on it by the world’s best architectural visualization artists. Sometimes, we’re so caught up in the daily routine that we don’t see the bigger picture as much as we should, so I’ve taken a snapshot of what architectural visualization looks like through the lens of my blog to see what we do, how we do it and where we are heading.

For a long time, I kept a dual approach to architectural visualization. To be more precise, I think I refused to pick one so that I wouldn’t miss out on the benefits the other has to offer. Recently, considering technological advances, the market and some assumptions about the future, I’m gravitating toward one approach in a meaningful way.

Ronen Bekerman’s work during SOA’s Peter Guthrie Lighting Masterclass; © Ronen Bekerman

In a nutshell, we can segment the architectural visualization field into two approaches. There’s pure 3D photorealistic and there’s non-photorealistic (NPR), which is a much broader approach in terms of the various styles showcased within it.

What’s so important in defining and discussing these approaches, you might ask. There are many successful and world-renowned artists who take more than one approach to architectural visualization, so being good at it isn’t limited to one or the other.

Even so, each approach relies heavily on a specific technology or skill set and offers varying flexibility in the work process. Considering this is a business with budgets and time constraints, you can see how your choice of approach is important. It matters even more in light of recent technological advances and the expectations driven by consumers in different markets.

So, let’s explore “A Photographic Approach to Architectural Visualization” through the lens of Berlin-based studio xoio.

Xoio was established in 2006 by Peter Stulz and Lasse Rode. It specializes in high-quality emotive architecture and product visualization. The team at xoio consists of people who have an architecture and design background, which provides a strong understanding of creative processes and the ability to give support in questions of design.

Here, Lasse shares insights into his photographic approach to rendering. This article was first published in March 2013, so it refers to technology and software available at that time. The underlying concepts still hold up.

Introduction

I am currently working on a set of images for a commercial project that somehow have a painterly feel to them, but the rules described in the following article can still apply. In our (beloved) chosen profession as visualization artists, we have to put things in the most comfortable setting possible, which brings into play various other aspects besides just aiming for “photorealism.”

This is why this article ended up being more about setting up an image in general, or the rules of thumb I use most of the time. They are not complete and, of course, there might be better ways. Some of them may also have been described in the past by classic painters, graphic designers, photographers and so on. So consider this more of an eclectic, basic compendium of the things I take care of in my daily work.

One important thing — and if you want a clear rule — is the thing that sets a still image apart from an animation! The still has to tell a story in a single static frame, while an animation has the luxury of many more frames and the movement to do that. Therefore, it is very important for the still to be easily readable and come to its point very precisely.

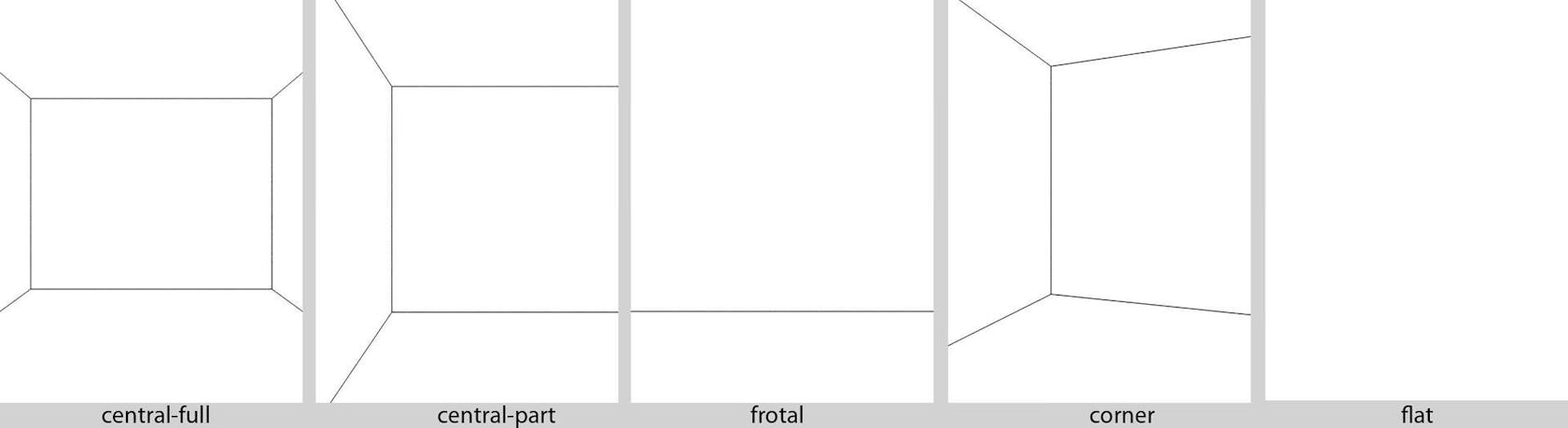

Composition

Finding a good composition is one of the hardest parts and also the most important one. For me it helps a lot to start with classic compositions like a central perspective and so on.

The key here is to keep it as clear and readable as possible. Reducing the perspective lines makes you understand the image quicker.

Of course, this goes for interior renders as well as for exterior renders, just inverted sometimes (see corner-perspective, which is referred to as a two-point perspective).

Most of the time I work with photographic references, and with some of my personal projects I try to reproduce photographs to a certain degree … some more than others. Of course, when reproducing a photograph my personal creative input into it is minimal (I’m trying to match the photo), but I learn something from it every time that can be used on actual live client projects. Basically, that is what it is all about when doing personal projects — testing and developing one’s skills in an environment free of the usual production constraints like time, money and others’ comments. You can then carry the things you learned along to other projects if they have value.

After getting the composition right, I also pay attention to the “depth composition”: the way I’m layering the image with the classic foreground / middle ground / background scheme. This is a very simple rule, but it really helps in making your point with a still by taking advantage of our depth perception. You should always try to work with Depth of Field in various degrees, which is one of the most effective tools you have to enhance this effect (other methods use color and brightness as depth cues).

As you can see in the schematics below, it is very simple to achieve but also very effective.

You can see in the images below how this effect comes into play, helping you focus on the part of the image the creator wishes you to focus on. Of course, this is more obvious in short-range closeup shots, but this can be a strong tool when applied smartly on large-scale scenes, too.

You will also notice that if you try to apply the famous “Rule of Thirds” onto your images, they become somewhat balanced composition-wise. Just try to align the main parts of your image with the grid and see how it might help you.

Don’t think of it as simply placing the obvious “lines” in the scene, but more about the key “story” elements that you place in specific areas based on such a grid to balance things out. Applied on two of the images you have just seen, this would look like this …

Many cameras have this grid implemented in the visor as a feature. Photoshop also shows this grid when you use the crop tool. 3dsmax also has these grid options in safe-view mode … It absolutely makes sense.
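If your tool of choice does not offer such an overlay, the grid is easy to work out by hand. The following is only a minimal sketch: the function names `thirds_grid` and `nearest_power_point` are made up for this illustration and do not belong to any camera, Photoshop or 3ds Max API.

```python
import math


def thirds_grid(width, height):
    """Return the guide positions of a rule-of-thirds grid.

    xs are the two vertical guides, ys the two horizontal guides and
    points the four intersections of those guides.
    """
    xs = [width / 3, 2 * width / 3]
    ys = [height / 3, 2 * height / 3]
    points = [(x, y) for y in ys for x in xs]
    return xs, ys, points


def nearest_power_point(subject, width, height):
    """Return the grid intersection closest to a subject position (x, y)."""
    _, _, points = thirds_grid(width, height)
    return min(points, key=lambda p: math.dist(p, subject))
```

For a 900 by 600 pixel frame, the vertical guides sit at 300 and 600 pixels and the horizontal ones at 200 and 400, so a key element at (280, 210) is already very close to the upper-left intersection.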

Focal Length

Try to avoid using a focal length shorter than 30 millimeters. I often use something between 35 and 55 millimeters.

Wide angle is being criminally abused in ArchViz because we are always tasked to show as much as possible in a single image. I’ll remind you that we do have super powers inside the CG Matrix, and so we can see through walls by cutting them, hiding them or using camera clip planes. This will help avoid the distortions of a wide-angle focal length by allowing us to go back and narrow it down.

Regardless of that, I think it is important to think about how we — as humans — view the world. I find it really important to try and come as close as possible to what you would see in reality.

Each human eye has a viewing angle anywhere between 120 and 180 degrees. The region where both eyes overlap amounts to about 130 degrees, which is very similar to what a fish-eye lens can offer, but we can’t actually “see” this entire field. Our central field of view is what counts, and it amounts to 40 to 60 degrees. That is roughly the equivalent of the 50-millimeter “normal” lens, considered to bear the best resemblance to what we humans see. You can learn a lot more about this in Cameras vs. the Human Eye over at the Cambridge in Colour website (a great source of insight on all things related to photography).
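The relation between focal length and viewing angle can be checked with the standard angle-of-view formula, angle = 2 · atan(sensor size / (2 · focal length)). The sketch below assumes a full-frame sensor that is 36 millimeters wide; that value is an assumption for illustration and is not stated in the article, so adjust it to your own camera settings.

```python
import math


def angle_of_view(focal_length_mm, sensor_size_mm=36.0):
    """Angle of view in degrees for a rectilinear lens.

    sensor_size_mm defaults to the 36 mm width of a full-frame sensor,
    which is an assumption made for this example.
    """
    return math.degrees(2 * math.atan(sensor_size_mm / (2 * focal_length_mm)))


if __name__ == "__main__":
    for focal in (24, 30, 35, 50, 55):
        print(f"{focal} mm -> {angle_of_view(focal):.1f} degrees horizontally")
```

Under this assumption, a 50-millimeter lens covers a little under 40 degrees horizontally and a 35-millimeter lens about 54 degrees, so the 35 to 55 millimeter range stays close to the central field of view, while a 24-millimeter lens opens up to more than 70 degrees.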

Modeling and Shading

Considering modeling, it is more of a general statement that I want to make here, rather than a technical manual. You really should aim to get your models as accurate as possible. Get the general proportions and the fine details right, and that will do the job.

You should really force yourself to maintain a certain minimum level of detailing, but do consider the visibility of such details in the final images you will make. Time permitting, I would always suggest doing as much detail as possible so you can later explore any view you like without any limitation.

As you can see in the screenshot below, the window has been modeled to a level that makes sense regarding its distance from the camera and the resolution at which the image has been rendered.

The same logic also applies to the shading and materials. You can dramatically enhance your images by using the right textures.

The real world is a highly reflective place! Pure diffuse is pretty hard to come by actually, whereas in the CG world, it is very easy to diffuse your scenes to death.

Most things reflect light in a certain way, and the biggest difference is related to the surface glossiness. Considering this — all of your materials should have some level of reflection and have maps assigned to their glossiness and reflection slots. Don’t be lazy on this one. It can have a great impact on the final look.

I can recommend reading Bertrand Benoit’s “Materialism” and “Snow White’s Coffin” articles on his blog regarding this topic. Great useful tips there.

Natural Lighting

There is a lot that has been said about this topic, but let me point you to Peter Guthrie’s HDRI-related posts as well as the HDR Image Based Lighting 3D Scene Setup article by Ronen Bekerman, which are great resources on this subject, focusing on the use of HDR images as the base for natural-looking lighting with or without the combination of other light sources.

One thing that is important to address, though, is the issue of controlling color and lighting angle/diffuse.

In the image below, for example, it worked best using three planes slightly slanted, deformed by a noise modifier with a light-material applied to simulate the light I wanted to reconstruct from the original photo. Only this way, it was possible to get the really subtle shadow-gradient on the wall and the round part behind the staircase.

Color Balance

To get a balanced feel for the whites in a scene, you should take care that only the very brightest spot in your image goes up to almost complete white. The rest of the whites in your render should range around 190 to 220. You also should try to let the darker tones play a role in your image. Always remember to make it look balanced and not have too many overexposed or underexposed areas.

The main thing that is really important to pay attention to here and understand, besides the world being reflective, is that the world is also a very gray place. That might sound a bit depressing, but it is not meant to come across like that … It is just that it is all shades of gray (some say 50).

As you can see above, the only thing that is really white in this scene is the overexposed window. Everything else (brown elements aside) is shaded between black and light gray. Even the white walls are not really white. Of course, this image is somewhat dark to start with, but you still read the walls as being white.
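A quick way to test a render against these rules of thumb is to look at its gray values. The following is a rough sketch that works on a plain list of 8-bit gray values (for example, sampled from the white walls). The function name `tonal_report` and the clipping limits of 250 and 5 are assumptions chosen for this example; only the 190 to 220 range comes from the text.

```python
def tonal_report(gray_values, white_range=(190, 220), clip_high=250, clip_low=5):
    """Summarize how a list of 8-bit gray values is distributed.

    white_range follows the 190 to 220 rule of thumb from the article.
    clip_high and clip_low are illustrative limits for "almost white"
    and "almost black" and can be tuned freely.
    """
    total = len(gray_values)
    low, high = white_range
    return {
        "brightest": max(gray_values),
        "in_white_range": sum(low <= v <= high for v in gray_values) / total,
        "overexposed": sum(v >= clip_high for v in gray_values) / total,
        "underexposed": sum(v <= clip_low for v in gray_values) / total,
    }
```

A small overexposed share together with a large share inside the white range suggests the whites are balanced; a large overexposed share is a hint that the render needs to be toned down.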

The distribution of dark and light areas in your image is a very relative thing, too. You should keep that in mind. The following image shows quite well what happens with the same gray placed in different areas of the image. Notice the area within the gradient … The gray square looks as if it has a gradient itself! But that is not the case. The color is RGB = 110,110,110 everywhere.
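You can reproduce this effect yourself. The sketch below builds a similar test pattern, a black-to-white gradient with a bar of constant gray 110 running through it, and can save it as a plain-text PGM file that most image viewers open. It is a simplified stand-in for the image described here, not a reconstruction of it, and the names `contrast_illusion` and `write_pgm` are made up for the example.

```python
def contrast_illusion(width=256, height=96, bar_gray=110):
    """Return rows of 8-bit gray values forming the test pattern.

    The top and bottom thirds hold a horizontal black-to-white gradient;
    the middle third is a bar with one constant gray value.
    """
    top, bottom = height // 3, 2 * height // 3
    rows = []
    for y in range(height):
        if top <= y < bottom:
            rows.append([bar_gray] * width)
        else:
            rows.append([round(255 * x / (width - 1)) for x in range(width)])
    return rows


def write_pgm(rows, path):
    """Write the rows as an ASCII (P2) PGM grayscale image."""
    with open(path, "w") as handle:
        handle.write(f"P2\n{len(rows[0])} {len(rows)}\n255\n")
        for row in rows:
            handle.write(" ".join(str(value) for value in row) + "\n")
```

Calling `write_pgm(contrast_illusion(), "illusion.pgm")` produces the file. Every pixel in the bar holds the same value, yet the bar tends to look lighter next to the dark end of the gradient and darker next to the light end.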

To make your image consistent in colors, it should have a clear concept of coloring. The easiest way of course is having all shades of gray and one key color, but there is so much more to that I can’t really go into within the scope of this article.

I made this series of Casa Barragan some time ago. Each image has its own coloring, and yet they all feel like part of one series. Carrying Barragan’s architecture and use of color over into the coloring of these renders has allowed me to make them all part of the same set color-wise.

Rendering

There are a lot of resources on this topic to be found on the web and elsewhere, and I am sure there are a lot of people around who know more about it than I do, but I would like to share some of my personal insight on this topic. There is one thing that I really find important for an image if you are aiming at photorealism, and that is GRAIN.

Don’t fear it! A slight and subtle grain in your image might just make it look more PHOTO-real. The super clean smooth look sometimes is the one to scream CG out loud. Ironically, using lower render settings can help you with this grain. Let me share with you an image by Sarah Dorweiler. You might have come across her work on Ronen Bekerman’s forum. She is a 3D visualizer based in Berlin.

She told me her main tools are SketchUp for modeling and Indigo Render for … well … rendering. It is an unbiased render engine, which is also worth keeping an eye on. The main advantages of rendering unbiased are the clarity in the details and the subtle grain these engines produce due to the way they work. You start with lots of noise, and it clears up during the process. You rarely, if ever, get a 100-percent converged result, so you always have some noise.
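The way this noise clears up can be imitated with a toy Monte Carlo experiment. The sketch below is not how Indigo or any other engine is implemented; it simply averages random values per “pixel” and measures how much the pixels still differ from each other. The names `pixel_estimate` and `noise_level` are made up for the example.

```python
import random
import statistics


def pixel_estimate(samples, rng):
    """Average a number of random light contributions for one pixel.

    Each contribution is a uniform random value, so the true result is 0.5.
    """
    return sum(rng.random() for _ in range(samples)) / samples


def noise_level(samples, pixels=2000, seed=1):
    """Standard deviation across many pixels rendered with the same sample count."""
    rng = random.Random(seed)
    estimates = [pixel_estimate(samples, rng) for _ in range(pixels)]
    return statistics.pstdev(estimates)


if __name__ == "__main__":
    for samples in (1, 4, 16, 64, 256):
        print(f"{samples:>3} samples per pixel -> noise {noise_level(samples):.4f}")
```

In this kind of estimate, the noise falls with the square root of the sample count: sixteen times the samples gives only about a quarter of the noise. That is why the last bit of grain takes so long to disappear and why a fully converged result is so rare.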

You can feel it in this image: Not only is it a beautiful picture, it also has this “gritty” feel to it, very haptic.

I’ll use the image with the three chairs as a testing environment.

I have applied a standard light-gray shader to everything in the scene. This scene is actually very stressful for the GI due to the very few openings through which light can enter the room … It is just the small windows in the front and a large door on the left, so light is being bounced a lot here. I used an HDRI applied to a dome for lighting this scene, with light subdivs set to 64.

I did these tests at a resolution of 800 by 541 pixels on a 4-core i7 (the slowest machine in our studio). First, I gave the standard Irradiance Map / Light cache technique a try. As you can see in the left-hand image below, rendering with default settings, the outcome lacks a lot in quality. There are lots of splotches everywhere, and the geometry and details are almost lost.

When switching on the filtering in the LC and the detail-enhancement option in the Irradiance Map, the image (below right) looks better. It still has some issues with splotches. It also took a long time to render.

In one version, I did crank up the Irradiance Map settings; made it finer and used more hemispheric subdivs. It is better but still has ugly artifacts near the windows. The rendering time went up to a ridiculous 4.5 hours. One solution could be adding some skylight portals, but this would not contribute to faster rendering either.

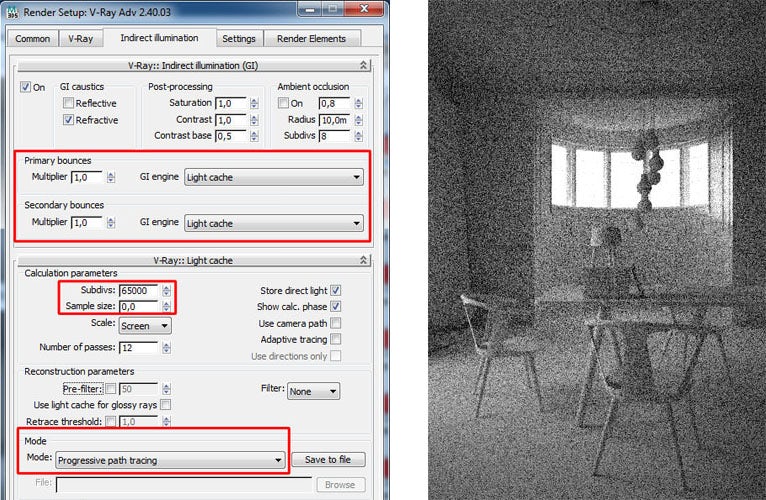

V-Ray also has the ability to work in an unbiased mode. You have to set both GI engines to Light cache, set the subdivs to the max (65000) and the sample size to 0. Change the mode to Progressive Path Tracing (PPT). The rendering process would look like the righthand image below (similar to other unbiased renderings).

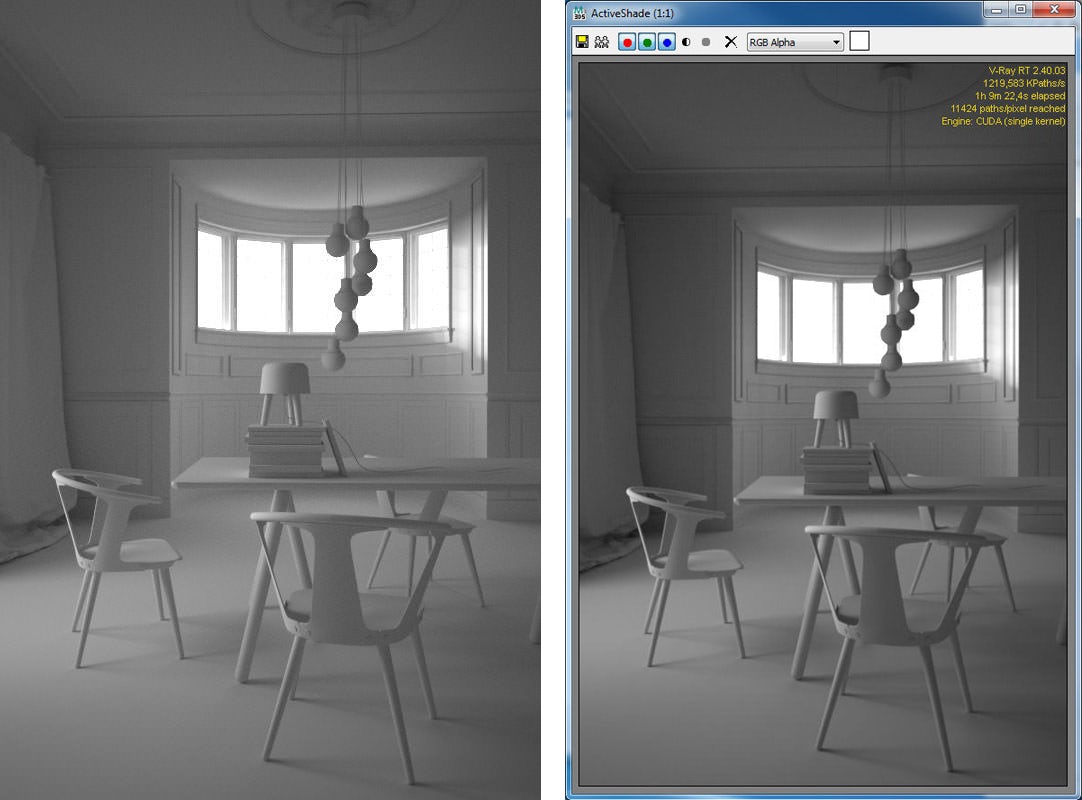

After running through, it would look like the below-left image. This took very long to render — eight hours — but in the end, it looks quite decent, I think. It has the right amount of grain, and the details come out looking good! When talking about unbiased rendering, we should not forget the latest GPU technologies. You might have heard of Octane Render, which has had a great evolution over recent years. I have tested this, as well, and it really looks very promising.

In the case below-right I tested the scene with V-Ray RT with the CUDA engine. I have a GTX 660 Ti installed in my current workstation, which sports 1344 CUDA processors. As you can see, the result after about one hour of rendering is similar to the eight-hour PPT result; even more contrasted, which actually looks even better.

Lastly, I want to give full Brute Force GI a chance, as this was the technique I used for rendering the images of the &Tradition set. You might have categorized brute force as quite a slow and time-consuming technique. Well, it can be, but if you take full advantage of V-Ray’s adaptive DMC sampler, it can be quite fast!

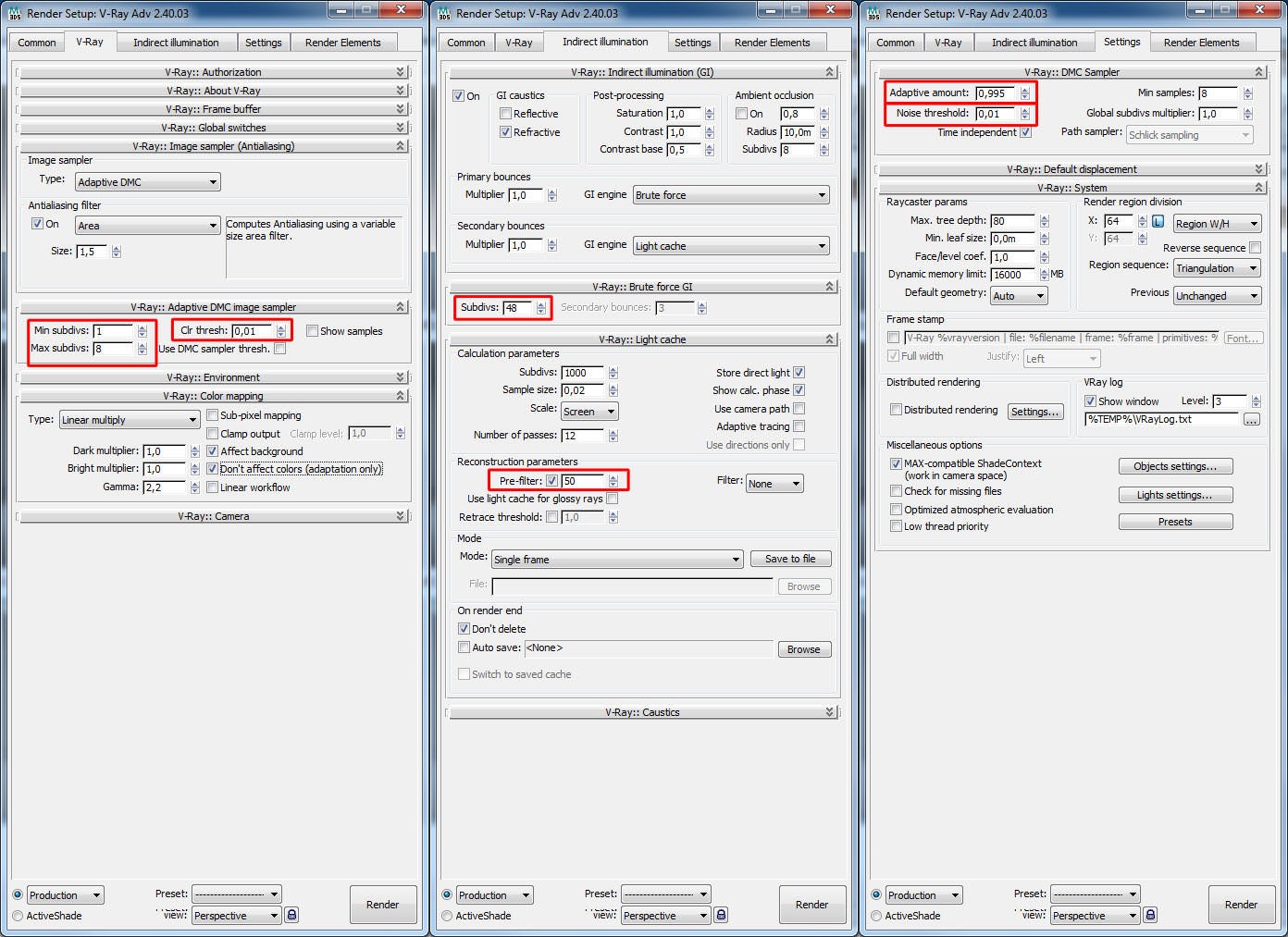

It works quite well for me setting the adaptive amount of the DMC sampler at 0.995 and the subdivs from 1 to 8 (see the full settings below). Leave the color threshold at 0.01 for the beginning — the noise threshold in the settings tab is used to control the noise. Don’t forget to pre-filter the Light cache, as this will give nice details.

The following images show the Brute Force output with different noise thresholds from 0.5 down to 0.001. You can see a very large difference in noisiness, with the difference in render time ranging from nine minutes (worst quality) to 16 minutes (best quality).

From left to right: noise threshold 0.5, 0.1 and 0.01

From left to right: noise threshold 0.001, 0.002 and 0.005
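The general idea behind a noise threshold can be sketched in a few lines: keep sampling a pixel until its estimated noise drops below the threshold or a maximum sample count is reached. This is only an illustration of adaptive sampling in general and does not reproduce V-Ray’s actual DMC sampler; the function name `adaptive_pixel`, its parameters and its stopping rule are assumptions made for this example.

```python
import statistics


def adaptive_pixel(sample_fn, min_samples=2, max_samples=64, noise_threshold=0.01):
    """Sample one pixel until its estimated noise is low enough.

    sample_fn returns one random light contribution per call. Sampling
    stops once the standard error of the mean falls to noise_threshold
    or below, or once max_samples is reached. min_samples must be at
    least 2 so that a standard deviation can be computed.
    """
    values = [sample_fn() for _ in range(min_samples)]
    while len(values) < max_samples:
        std_error = statistics.stdev(values) / len(values) ** 0.5
        if std_error <= noise_threshold:
            break
        values.append(sample_fn())
    return sum(values) / len(values), len(values)
```

With a loose threshold, almost every pixel stops at the minimum sample count and the image stays grainy. With a strict threshold, difficult pixels run all the way to the maximum, which is where the extra render time goes.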

Conclusion

Of course you can use whatever render engine you like, and in the end, it all depends on how you manage your work and your deadlines. 3D artists working with traditional (non GPU-based) unbiased engines are able to get their images out in time just like 3D artists who use faster methods. It all comes down to how you organize your process.

I prefer the Brute Force workflow because it can be faster than an IM/LC setup or at least on par and delivers better-quality results. It does have its traps, though, where render times explode! So be careful! An IM/LC setup would be a good place to start, perhaps … to be on the safe side. You can still tweak it to be fast if you are ok with some quality-issues. This is one of the big strengths V-Ray has to offer.

I’m very much looking forward to the enhancements of GPU-based technologies. My current graphics card has 3GB of VRAM, which is quite good but still has some room for improvement. Compared to traditional unbiased rendering setups, it offers great improvements in render-times. Compared to biased rendering with V-Ray, you can already get good and faster results.

There is no final conclusion on this because we always strive for better quality, and render-times are relative to what you have on the table. A render farm surely stands more to gain from any kind of render-time improvement … We 3D artists aren’t robots, so we do have down times and can render as long as needed. Other times we just render it out dirty (no matter what render engine is used).

Postwork / Photoshop

There is this thing called the Post Production stage, but in my opinion it also plays a big role in the actual Production process. There are visualizers who actually do the main part of their work in this stage. So let’s not sell it short!

The path I choose to follow depends on the goal I set at the beginning and the timeframe, too. Producing images for a competition is a different ballgame than producing high-end interior visualizations for a real estate marketing print ad. It simply demands completely different things from the process of making these images and from the images themselves.

In the &Tradition project, the Post Process stage was very minimal. Most of what you see came out with the render. Photographers do not have the power of CG in their hands as we do, and so most times their postproduction stage is also minimal (at least when considering architectural photography … Other fields may display extensive post-processing). In our field today, most of the render engines are able to produce photorealistic images.

You must keep in mind that it depends on how you tap into it and take advantage of the engine’s full potential. If you take on the challenge and work on all aspects of your scene — modeling, composition, lighting, textures, materials, colors and styling — then you are at the point where your raw render is the final result or very near. You’ll be left with minimal post-processing, just the same as a photographer.

A small tip I’d like to share about those final tweaks to your image is this: Aim to subtract values rather than adding to them. So for example, if you want something to be more yellow, reduce the blues. For me, that works very well and does wonders for your overall result. This sounds a bit esoteric, I know, but I think the reason for this is that you avoid overexposure of certain areas and disturbing the overall balance of your image by keeping the colors at lower levels.
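The difference is easy to see with numbers. The sketch below applies both options to a single 8-bit RGB value; the function names are made up for the example, and the adjustment is a simple offset rather than what any particular Photoshop tool does internally.

```python
def clamp(value):
    """Keep a channel value inside the 8-bit range 0 to 255."""
    return max(0, min(255, value))


def warm_by_adding(rgb, amount):
    """Make a color more yellow by raising red and green."""
    r, g, b = rgb
    return (clamp(r + amount), clamp(g + amount), b)


def warm_by_subtracting(rgb, amount):
    """Make a color more yellow by lowering blue instead."""
    r, g, b = rgb
    return (r, g, clamp(b - amount))
```

Starting from a bright wall tone of (230, 230, 230) and an amount of 40, adding pushes red and green to the 255 limit and clips them, while subtracting gives (230, 230, 190): the same shift toward yellow, with no channel brighter than before.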

So, finally, I hope I did not bore you with my bag of half-knowledge and esoteric ideas of how images work and do not work. At some parts it may sound somewhat banal, but that sometimes is how everything comes together. An addition of simple things. I’m a big fan of the KISS principle (Keep It Simple, Stupid) and “less is more!”

This is just a part of my view on things 3D-related. I hope you enjoyed digging through this!

Kind regards,

Lasse.

This article was first published on Ronen Bekerman Architectural Visualization Blog in March 2013. Enjoy this article? Check out the other features in our series on “The Art of Rendering”:

Methanoia Reveals the Story Behind Architecture’s Most Striking Visualizations

When Architectural Visualization Gets It Right: Victor Enrich’s Surreal Art

7 Magical Demonstrations of Hyper-Real Environments

Alex Hogrefe Creates Stunning Architectural Visualizations Using Only SketchUp and Photoshop

How Technology Will Revolutionize Architectural Representations
